Each year, AT&T’s Field Operations (AFO) team responds to approximately 19 million calls requesting the location of its underground lines. 811 is the national Call Before You Dig (CBYD) phone number, designed to help homeowners and contractors avoid the fines, injuries, and service interruptions caused by unintentionally digging into an underground utility line. Verifying the location of lines and other assets is a complex, multi-step process. It involves a combination of systems, tools, and departments to accurately identify customer location data, confirm the geolocation of AT&T underground assets, and dispatch field personnel to mark line locations.

My team and I recently had a chance to apply new technology to make the CBYD program much more effective. We combined three techniques: geospatial mapping, natural language processing of the plain-English requests on trouble tickets, and AI image recognition on street view photos. With them, AT&T can now identify almost instantly whether we have cables buried at proposed worksites and whether a technician needs to drive out and physically mark the spots. This makes us more efficient and, most importantly, reduces the chance of an accidental cable cut that could disrupt service to our customers.

The 19 million dig requests that AT&T receives originate from hundreds of construction and repair projects. These include the build-out of new neighborhoods, consumers repairing their fences, and civil projects like adding a new turn lane or sidewalk to a road.

A Growing Challenge

In the U.S., the Federal Communications Commission has mandated that utility providers like AT&T provide buried asset location verification and marking services at no cost to the caller, usually within two to three days. Any property owner or contractor who plans to dig is required by law to request an asset location. They can do so by calling the national non-emergency 811 “Call Before You Dig” phone number or by visiting their state’s 811 website. Based on these requests, providers must mark the approximate location of buried utilities with paint or flags. This keeps anyone from unintentionally digging into underground lines and causing service disruptions, property damage, or bodily harm.

AT&T must meet a strict set of requirements for every line-locate request, including:

- For emergency requests (e.g., repairs related to unexpected events for consumers or civic institutions), confirming receipt and either dismissing the request or dispatching field personnel within 4 hours of ticket creation.

- For non-emergency requests, either dismissing those outside of AT&T’s service areas or sending a team to mark lines in the dig area within 48 hours.

- Internally, AT&T has set a goal of two hours to confirm receipt and automatically push requests to vendors for subsequent review or markup.
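The following minimal Python sketch computes the response deadlines for a ticket, which makes the timelines above concrete. It makes two assumptions. First, the 48-hour window applies to non-emergency tickets. Second, time is counted in wall-clock hours, although state rules may count business days instead. The function and key names are hypothetical and are not part of any AT&T system.

```python
from datetime import datetime, timedelta

# Windows taken from the requirements listed above; counting them in
# wall-clock hours is an assumption (some state rules use business days).
EMERGENCY_WINDOW = timedelta(hours=4)
STANDARD_WINDOW = timedelta(hours=48)   # assumed to apply to non-emergency tickets
INTERNAL_ACK_GOAL = timedelta(hours=2)  # internal receipt / vendor-push goal


def ticket_deadlines(created: datetime, emergency: bool) -> dict:
    """Return hypothetical deadline timestamps for a single locate ticket."""
    window = EMERGENCY_WINDOW if emergency else STANDARD_WINDOW
    return {
        "internal_ack_goal": created + INTERNAL_ACK_GOAL,
        "required_response": created + window,
    }


def is_overdue(created: datetime, emergency: bool, now: datetime) -> bool:
    """True if the required response window has already elapsed at `now`."""
    return now > ticket_deadlines(created, emergency)["required_response"]


if __name__ == "__main__":
    created = datetime(2024, 4, 1, 9, 0)
    print(ticket_deadlines(created, emergency=True)["required_response"])
    # prints 2024-04-01 13:00:00
```

Because the internal two-hour goal is tighter than either regulatory window, a ticket that meets the internal goal also meets the required window for its type.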

About 30 percent of line-locate requests require no AT&T intervention because the job sites are outside AT&T’s service areas. In the past, an additional 30 to 35 percent of requests triggered unnecessary field dispatches. Inaccurate job-site “dig box” markings and a lack of precise dig-site images led to visits to sites where AT&T lines and assets were at no risk of dig damage. Meanwhile, new home construction and increased connectivity needs mean that the volume of locate requests keeps growing. AT&T itself is playing a large role in the continued buildout of high-speed fiber. In the third quarter of 2023, AT&T had the ability to serve 20.7 million consumer locations and about 3.3 million business customer locations. The company is on track to pass more than 30 million fiber locations by the end of 2025.
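A quick back-of-the-envelope calculation shows why these percentages matter. The sketch below applies the historical rates from the paragraph above to the roughly 19 million annual requests. That is an assumption, because the dispatch rate describes the past. Under it, well over half of all tickets needed no field visit.

```python
# Figures from the text; applying historical rates to today's volume is an assumption.
ANNUAL_REQUESTS = 19_000_000
OUT_OF_AREA_RATE = 0.30                    # job sites outside AT&T's service areas
UNNECESSARY_DISPATCH_RATES = (0.30, 0.35)  # low and high ends of the historical range


def tickets_without_field_need(unnecessary_rate: float) -> int:
    """Estimated yearly tickets that needed no AT&T field visit."""
    share = OUT_OF_AREA_RATE + unnecessary_rate
    return round(ANNUAL_REQUESTS * share)


if __name__ == "__main__":
    for rate in UNNECESSARY_DISPATCH_RATES:
        share = OUT_OF_AREA_RATE + rate
        print(f"{share:.0%}: ~{tickets_without_field_need(rate):,} tickets")
    # 60%: ~11,400,000 tickets
    # 65%: ~12,350,000 tickets
```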

By combining the subject matter expertise of AFO technical staff with my team’s work, AT&T has filed patents on technology that lets technicians and consumers alike use augmented reality to simulate the placement of a new buried facility.

The Field Operations team continues to make substantial progress on these issues with statistical and rules-based automation. In late 2021, the team set out to modernize the CBYD 811 process further using machine learning (ML) and artificial intelligence (AI) technologies.

Data Science and AI Opportunity

Call Before You Dig uses a number of technologies that have been enhanced with AI and automation.

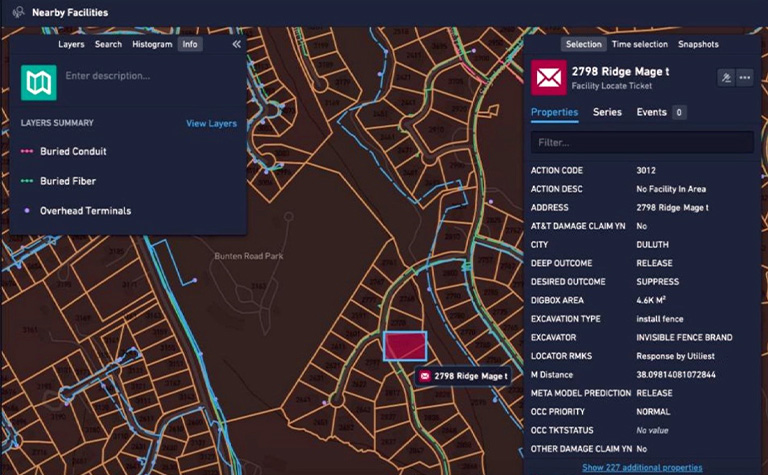

The first addition is more granular geospatial recognition of the land, the parcels of homes, and the facilities known to AT&T in the area (buried cables, fiber, and so on). We used H3, a scalable, open-source system of hexagonal “cells,” and it dramatically accelerated the indexing of geospatial locations and their contents. Fast indexing and retrieval are critical to meeting the 4-hour confirmation window required for emergency tickets. H3 tiles also come in multiple resolutions. This lets algorithms analyze the impact of a damaged facility near a dig box at the level of a street, block, neighborhood, and even wire center in a matter of seconds. The same computation previously took several minutes at each level.

Screenshot of the Call Before You Dig mapping tool
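H3 itself is a real open-source library. To keep this example dependency-free, however, the sketch below swaps in a much simpler square grid whose cells nest by powers of two. It still illustrates the multi-resolution idea described above. Each asset is indexed once per resolution, so a dig-box lookup at street, block, or neighborhood scale takes a handful of dictionary reads instead of a full scan. The cell sizes, level count, planar meter coordinates, and asset IDs are all hypothetical. This is neither AT&T's implementation nor H3's hexagonal geometry or API.

```python
import math
from collections import defaultdict

# Simplified square-grid stand-in for H3 (not hexagonal, not H3's API).
# Coordinates are assumed to be planar meters from some local projection.
BASE_CELL_M = 10.0  # hypothetical side length of the finest cell
LEVELS = 6          # levels 0..5: 10 m, 20 m, ... 320 m cells


def cell_at(x: float, y: float, level: int) -> tuple:
    """(level, column, row) of the cell containing point (x, y)."""
    side = BASE_CELL_M * 2 ** level
    return (level, math.floor(x / side), math.floor(y / side))


def parent(cell: tuple) -> tuple:
    """Coarser cell one level up; each parent covers a 2 x 2 block of children."""
    level, col, row = cell
    return (level + 1, col // 2, row // 2)


class GridIndex:
    def __init__(self) -> None:
        self._buckets = defaultdict(set)  # cell -> set of asset IDs

    def insert(self, asset_id: str, x: float, y: float) -> None:
        """Register an asset in its cell at every resolution level."""
        cell = cell_at(x, y, 0)
        for _ in range(LEVELS):
            self._buckets[cell].add(asset_id)
            cell = parent(cell)

    def nearby(self, x: float, y: float, level: int) -> set:
        """Assets in the query point's cell and its 8 neighbors at `level`."""
        lvl, col, row = cell_at(x, y, level)
        found = set()
        for dc in (-1, 0, 1):
            for dr in (-1, 0, 1):
                # .get avoids creating empty buckets for unvisited cells
                found |= self._buckets.get((lvl, col + dc, row + dr), set())
        return found


if __name__ == "__main__":
    idx = GridIndex()
    idx.insert("fiber-17", 105.0, 42.0)
    idx.insert("duct-9", 300.0, 40.0)
    idx.insert("copper-3", 900.0, 900.0)
    print(sorted(idx.nearby(100.0, 40.0, 0)))  # ['fiber-17']
    print(sorted(idx.nearby(100.0, 40.0, 5)))  # ['duct-9', 'fiber-17']
```

Real H3 cells behave somewhat differently. Because they are hexagons, all six neighbors sit at the same distance from a cell's center. H3's parent/child containment is also only approximate, unlike the exact 2 x 2 nesting used here.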

The second improvement is enhanced text analysis to better understand ticket data. Using natural language processing, we can match the written descriptions in tickets to precise geographic locations. The description in a dig request ticket is only a couple of sentences long. Even so, AI algorithms can often detect the patterns that dispatchers and callers use to describe a dig box. A dig box may span an entire block or both sides of a street, or it may pinpoint an area within a customer’s parcel. This analysis started with keyword spotting. With the proliferation of large language models (LLMs) such as generative pre-trained transformers (GPTs), additional intents can now be “read between the lines.” These intents help correlate digs within an area or surface other patterns that develop within a region.
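The keyword-spotting stage described above can be sketched with a few regular expressions that tag the dig-box extent a ticket describes. The labels and patterns are hypothetical examples, far smaller than anything production-ready. An LLM-based approach would replace or augment rules like these rather than resemble them.

```python
import re

# Hypothetical extent labels and a few phrasings that signal each one.
EXTENT_PATTERNS = {
    "entire_block": re.compile(r"\b(entire|whole)\s+block\b", re.IGNORECASE),
    "both_sides_of_street": re.compile(
        r"\bboth\s+sides\s+of\s+(the\s+)?(street|road|st|rd)\b", re.IGNORECASE
    ),
    "front_of_parcel": re.compile(
        r"\bfront\s+(yard|of\s+(the\s+)?(property|house|lot))\b", re.IGNORECASE
    ),
    "rear_of_parcel": re.compile(
        r"\b(back|rear)\s+(yard|of\s+(the\s+)?(property|house|lot))\b", re.IGNORECASE
    ),
}


def spot_extents(description: str) -> list:
    """Return the sorted extent labels whose patterns appear in a ticket description."""
    return sorted(
        label for label, pattern in EXTENT_PATTERNS.items() if pattern.search(description)
    )


if __name__ == "__main__":
    ticket = "Mark both sides of the street for the entire block from Oak Ave to Elm St."
    print(spot_extents(ticket))  # ['both_sides_of_street', 'entire_block']
    print(spot_extents("Installing fence in back yard along property line"))  # ['rear_of_parcel']
```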

A third data-based opportunity that my team investigated is the analysis of street view images. Street view images come from capture data that is typically months or years old (how often do you see a van with four cameras and a LIDAR sensor drive by your home?). Even so, they can validate the buried facility information used in geospatial analyses. We noticed that technicians inspecting tickets for dispatch or suppression would often head to popular map websites and perform this visual analysis themselves. This effort is an unsupervised computer vision (CV) machine learning task, but the abundance of data and the potential payoff were too great to pass up. Automated analysis of street view images doesn’t work for every ticket the AFO team gets, but it’s a great backup to help confirm a location.

Armed with this carefully curated data, my team developed several baseline component ML models to analyze the wide range of data types. For reproducibility and simulation, the AI solution records and archives every aspect of each 811 CBYD cable request. This provides a detailed audit trail for ongoing regulatory reporting and compliance. The automated workflow has also created two unique capabilities that were previously unavailable:

- Highly granular cost structure control, made possible by “tuning” the algorithm to state-, city-, or even neighborhood-based localities. This tuning offers a critical advantage over rule-based systems, because statistically learned patterns can be identified and evaluated for their financial and performance impacts.

- The ability to adjust the algorithm by type of request (damage repair vs. damage avoidance), time of year, ticket requester (or excavator), and size of the job. This allows the Field Operations team to evaluate springtime consumer home improvement digs differently from large infrastructure overhaul digs happening in inclement winter weather.
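The locality and request-type tuning described in the list above can be pictured as a dispatch threshold. The threshold falls back from the most specific locality to a default and is then adjusted by request type and season. Everything in the sketch is hypothetical: the threshold values, the multipliers, the locality keys, and the assumption that an upstream model produces a risk score between 0 and 1. It illustrates the idea only and is not AT&T's algorithm.

```python
from dataclasses import dataclass

DEFAULT_THRESHOLD = 0.50
# Hypothetical overrides keyed by (state,), (state, city), or (state, city, neighborhood).
LOCALITY_THRESHOLDS = {
    ("TX",): 0.45,
    ("TX", "Dallas"): 0.40,
    ("TX", "Dallas", "Uptown"): 0.30,
}
# Hypothetical multipliers; values below 1.0 lower the threshold (dispatch more readily).
REQUEST_TYPE_FACTOR = {"damage_repair": 0.8, "damage_avoidance": 1.0}
WINTER_FACTOR = 0.9
WINTER_MONTHS = {12, 1, 2}


@dataclass
class Ticket:
    state: str
    city: str
    neighborhood: str
    request_type: str
    month: int         # 1-12
    risk_score: float  # hypothetical model output in [0, 1]


def threshold_for(ticket: Ticket) -> float:
    """Most specific locality threshold, adjusted for request type and season."""
    key = (ticket.state, ticket.city, ticket.neighborhood)
    base = DEFAULT_THRESHOLD
    for n in range(len(key), 0, -1):  # try neighborhood, then city, then state
        if key[:n] in LOCALITY_THRESHOLDS:
            base = LOCALITY_THRESHOLDS[key[:n]]
            break
    factor = REQUEST_TYPE_FACTOR.get(ticket.request_type, 1.0)
    if ticket.month in WINTER_MONTHS:
        factor *= WINTER_FACTOR
    return base * factor


def should_dispatch(ticket: Ticket) -> bool:
    """Send a technician when the estimated risk meets the tuned threshold."""
    return ticket.risk_score >= threshold_for(ticket)


if __name__ == "__main__":
    uptown = Ticket("TX", "Dallas", "Uptown", "damage_avoidance", 4, 0.35)
    austin = Ticket("TX", "Austin", "Downtown", "damage_avoidance", 4, 0.35)
    print(should_dispatch(uptown), should_dispatch(austin))  # True False
```

The fallback through progressively coarser localities matters for sparse areas. A neighborhood with little ticket history can still inherit a sensible city or state setting instead of a single global value.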

Overall, the automated systems we created save the company $13 million to $16 million a year. They determine and approve when someone can dig, and they help avoid cable cuts. AT&T spends more than $250 million every year to support the Call Before You Dig infrastructure across the 21-state footprint that contains its buried facilities. I was honored to receive the AT&T Science & Technology Medal for outstanding technical leadership in Machine Learning supporting Field Operations for my role on the CBYD project. The AT&T Science & Technology Medals recognize achievements that demonstrate remarkable technical depth or breadth and make a unique and significant contribution to AT&T.

But it’s truly a team effort. The collaboration among everyone who helped make the CBYD project happen is a testament to our spirit of innovation.
